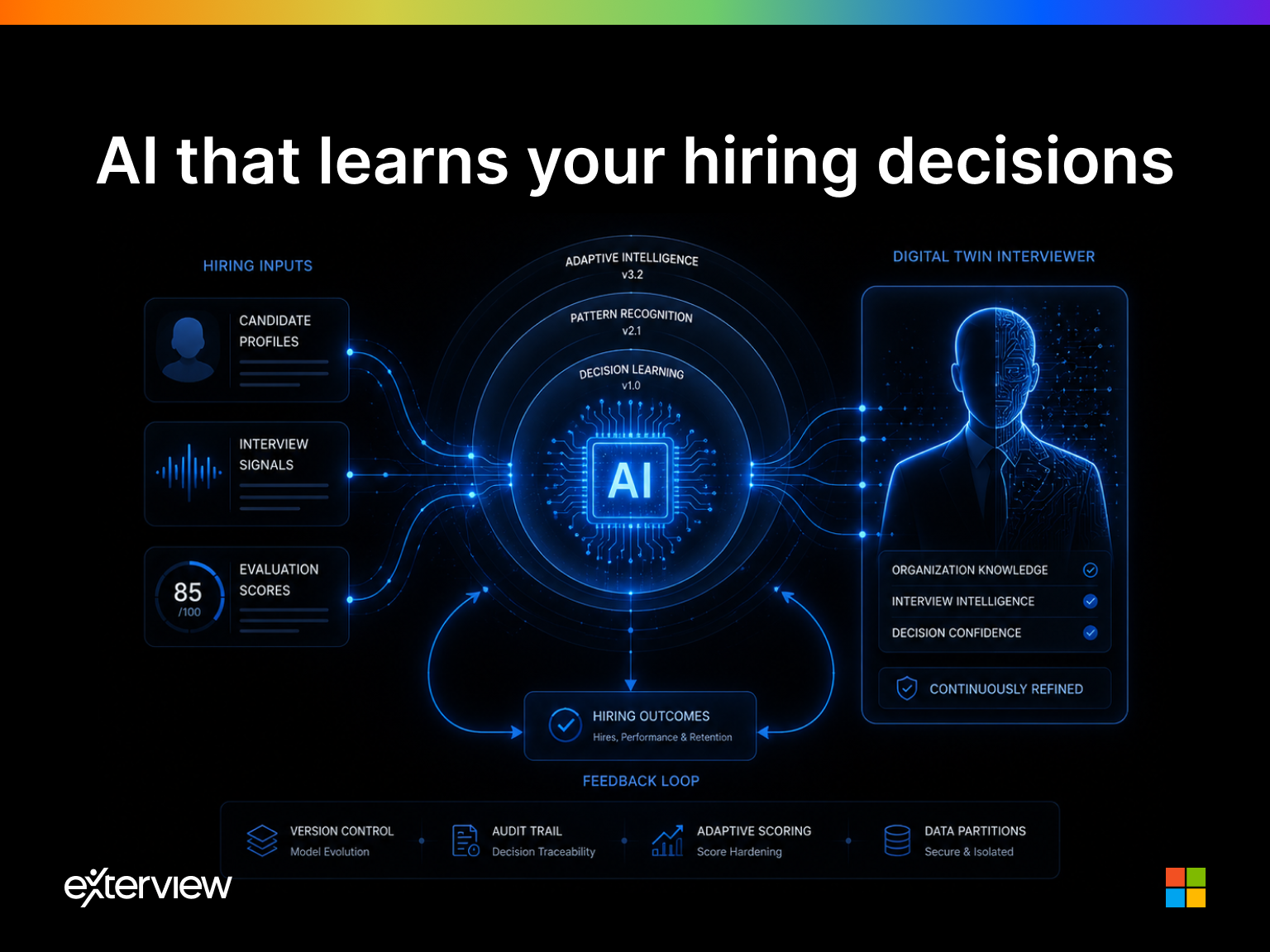

The AI Model That Learns From Your Decisions: Score Hardening and the Digital Twin Interviewer

AI hiring debates focus on deployment, not on how the AI evolves within an organisation over time.

Most conversations about AI in hiring focus on the point of deployment: which model is being used, how it generates questions, whether its outputs are explainable, and what governance framework prevents it from making discriminatory decisions. These are important questions. But they are questions about the beginning of the relationship between an AI system and an organisation, not about what that relationship becomes over time.

The more consequential question is one that most enterprise AI platforms cannot answer: what does the system know about your organisation six months after you deploy it that it did not know on day one? For most platforms, the answer is nothing. The model that evaluates your five-hundredth candidate is operating on exactly the same logic as the model that evaluated your first. It has not learned from your decisions. It has not absorbed the pattern of which candidates succeeded in your environment and which did not. It is still, in every functional sense, a generic tool applied to your specific context, but not shaped by it.

This is the architectural limitation that Score Hardening is designed to solve.

What Score Hardening Actually Is

Score Hardening is a feedback loop architecture that connects hiring outcomes to model behaviour. The mechanism is straightforward in principle, but significant in consequence.

Every time a candidate is evaluated by the AI system, a set of scores is produced: competency scores across defined technical dimensions, behavioural trait scores across a structured profiling framework, and a composite recommendation. These scores are, at the point of generation, a hypothesis. They represent the system's best prediction, given its current configuration, of which candidates are most likely to succeed in the role.

The test of that hypothesis comes later, at the 90-day performance review, at the six-month retention checkpoint, at the point where the hiring manager's assessment of the hire can be compared against the AI's original recommendation. When a candidate who was scored as a strong hire performs well, that confirms a signal. When a candidate who was borderline turns out to be exceptional, that refines one. When a candidate who scored highly underperforms against expectations, that is a recalibration event.

Score Hardening captures these outcome signals and feeds them back into the model's scoring configuration. Specifically, after every 20 confirmed positive outcomes (candidates who were recommended, hired, and validated at their 90-day review), the system runs an automated retrospective. It compares the original scores against the actual outcomes, identifies which competency dimensions and behavioural parameters were most predictive of success in this organisation, and proposes a recalibration of the weights and thresholds that govern future scoring.
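To make the retrospective concrete, here is a minimal sketch of the trigger and the weight proposal. The batch size of 20 comes from the description above; everything else is an assumption made for illustration, including the names `Outcome`, `retrospective_due` and `propose_weights`, the 0-to-1 score scale, and the simple mean-gap heuristic used to nudge the weights. The platform's actual recalibration method is not specified here.

```python
from dataclasses import dataclass
from statistics import mean

RETROSPECTIVE_BATCH = 20  # from the text: one retrospective per 20 confirmed positive outcomes


@dataclass(frozen=True)
class Outcome:
    """Hypothetical record pairing original scores with the later result."""
    scores: dict[str, float]  # per-dimension scores at interview time, assumed 0.0 to 1.0
    validated: bool           # True if hired and confirmed at the 90-day review


def retrospective_due(outcomes: list[Outcome], already_processed: int) -> bool:
    """True once 20 confirmed positives have accumulated since the last retrospective."""
    confirmed = sum(1 for o in outcomes if o.validated)
    return confirmed - already_processed >= RETROSPECTIVE_BATCH


def propose_weights(outcomes: list[Outcome], current: dict[str, float],
                    learning_rate: float = 0.2) -> dict[str, float]:
    """Assumed heuristic: shift weight toward dimensions where validated hires
    scored higher than hires who underperformed, then renormalise to sum to 1."""
    good = [o for o in outcomes if o.validated]
    poor = [o for o in outcomes if not o.validated]
    if not good or not poor:
        return dict(current)  # nothing to compare against, so propose no change
    raw = {}
    for dim, weight in current.items():
        gap = mean(o.scores[dim] for o in good) - mean(o.scores[dim] for o in poor)
        raw[dim] = max(weight + learning_rate * gap, 0.0)
    total = sum(raw.values())
    if total == 0:
        return dict(current)
    return {dim: w / total for dim, w in raw.items()}
```

The function only returns a proposal. It does not touch the active configuration, which keeps the calculation separate from the decision to apply it.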

The recalibration is never applied automatically. It is surfaced to the organisation's HR leadership for review and approval, with a clear explanation of what changed, why, and what the projected impact on future scoring decisions would be. If the HR lead approves, the new configuration takes effect for all subsequent interviews. If they do not, the existing configuration continues unchanged. Historical scores are never retroactively modified. The audit trail is complete.
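The approval gate can be sketched in a few lines. This is an illustration under stated assumptions, not the platform's implementation: `ScoringConfig`, `ScoredInterview` and `HardeningGate` are hypothetical names. The two properties it tries to show are the ones described above: a proposal changes nothing until a reviewer approves it, and each stored score carries the configuration version that produced it, so past scores are never rewritten.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ScoringConfig:
    version: int
    weights: dict[str, float]


@dataclass(frozen=True)
class ScoredInterview:
    """Immutable record: the score stays tied to the config that produced it."""
    candidate_id: str
    composite: float
    config_version: int


class HardeningGate:
    """Holds the active configuration and at most one proposal awaiting review."""

    def __init__(self, initial: ScoringConfig) -> None:
        self.active = initial
        self.pending: Optional[dict[str, float]] = None

    def propose(self, weights: dict[str, float]) -> None:
        # Recording a proposal never changes the active configuration.
        self.pending = dict(weights)

    def decide(self, approved: bool) -> ScoringConfig:
        if self.pending is None:
            raise ValueError("no proposal awaiting review")
        if approved:
            # Approval creates a new version used for subsequent interviews only.
            self.active = ScoringConfig(self.active.version + 1, self.pending)
        self.pending = None
        return self.active
```

A rejected proposal leaves `active` exactly as it was, and an interview scored under version 1 still reports version 1 after version 2 takes effect.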

This is the feedback loop. Outcomes inform weights. Weights shape future evaluations. Future evaluations produce more outcomes. Each cycle makes the system more precisely calibrated to the specific characteristics of successful hires in this organisation.

Why This Is Different from Model Fine-Tuning

It is worth being precise about what Score Hardening is and is not, because the concept is sometimes confused with model fine-tuning, the process of retraining an underlying AI model on new data.

Score Hardening does not retrain the model. The underlying models (the large language models that generate questions, assess responses, and synthesise evaluation reports) are not modified. What is modified is the scoring configuration that those models execute: the weights assigned to each competency dimension, the thresholds that govern how a composite recommendation is generated, and the sensitivity of specific behavioural parameters to particular response patterns. The models themselves remain the same. Their instructions become more precise.
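A small worked example may help show why changing the configuration is enough to change behaviour. It is a sketch under assumptions: the dimension names, the weighted-average formula, the threshold values and the labels are all invented for illustration. The same per-dimension scores, produced by the same unmodified model, yield a different recommendation once the weights are recalibrated.

```python
def composite_score(scores: dict[str, float], weights: dict[str, float]) -> float:
    """Weighted average of per-dimension scores (assumed formula)."""
    total = sum(weights.values())
    return sum(scores[dim] * w for dim, w in weights.items()) / total


def recommend(composite: float, thresholds: list[tuple[float, str]]) -> str:
    """Return the label of the highest threshold the composite reaches."""
    for minimum, label in sorted(thresholds, reverse=True):
        if composite >= minimum:
            return label
    return "no hire"


# Hypothetical scores from the unchanged underlying model.
scores = {"sql": 0.8, "system_design": 0.6, "collaboration": 0.9}
thresholds = [(0.75, "strong hire"), (0.60, "hire")]

# Configuration before and after a hypothetical approved recalibration.
weights_v1 = {"sql": 0.4, "system_design": 0.4, "collaboration": 0.2}
weights_v2 = {"sql": 0.2, "system_design": 0.3, "collaboration": 0.5}

print(recommend(composite_score(scores, weights_v1), thresholds))  # hire
print(recommend(composite_score(scores, weights_v2), thresholds))  # strong hire
```

Under the first configuration the composite is about 0.74, and under the second about 0.79, which crosses the assumed 0.75 threshold.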

The distinction matters for several reasons. Retraining models requires significant computational resources, introduces the risk of catastrophic forgetting, and is difficult to audit or reverse. Recalibrating scoring configurations is lightweight, fully auditable, versioned, and reversible. Every configuration state is stored in the audit log. If a recalibration produces unexpected results, rolling back to a prior version is immediate.
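Versioning and rollback of this kind can be sketched with an append-only log. The `ConfigLog` class and its event tuples are hypothetical. One design choice is assumed here: a rollback is recorded as a new version that copies an earlier one, so the log itself is never edited and the history of what was active, and when, stays intact.

```python
class ConfigLog:
    """Append-only store of scoring configurations (illustrative sketch)."""

    def __init__(self, initial_weights: dict[str, float]) -> None:
        self._versions: list[dict[str, float]] = [dict(initial_weights)]  # index 0 holds version 1
        self.events: list[tuple[str, int]] = [("create", 1)]

    @property
    def current_version(self) -> int:
        return len(self._versions)

    def current(self) -> dict[str, float]:
        # Return a copy so callers cannot mutate stored history.
        return dict(self._versions[-1])

    def apply(self, weights: dict[str, float]) -> int:
        """Append an approved recalibration as the next version."""
        self._versions.append(dict(weights))
        self.events.append(("apply", self.current_version))
        return self.current_version

    def rollback(self, to_version: int) -> int:
        """Restore an earlier configuration by appending a copy of it."""
        if not 1 <= to_version <= len(self._versions):
            raise ValueError(f"unknown version {to_version}")
        self._versions.append(dict(self._versions[to_version - 1]))
        self.events.append(("rollback", to_version))
        return self.current_version
```

Rolling back to version 1 after applying version 2 yields a version 3 with the same weights as version 1, and the event list still shows all three steps.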

It also matters because the knowledge that is being accumulated is not model knowledge; it is organisational knowledge. The system is not learning what a good hire looks like in general. It is learning what a good hire looks like in this organisation, in this role, with this workforce profile. That specificity is precisely what makes the accumulated intelligence valuable, and it is what makes the Bounded Context architecture necessary.

The Bounded Context Guarantee

In software architecture, and in domain-driven design in particular, a bounded context is an explicit boundary within which a particular model of the world applies. What is defined inside the boundary does not leak outside it, and nothing outside it changes what happens within.

Exterview applies this principle directly to tenant data. Every hiring outcome, every score recalibration, every configuration update generated within an organisation's deployment is stored within that organisation's isolated tenant context. It is never shared with, transferred to, or used to inform any other organisation's scoring model, regardless of whether those organisations operate in the same industry, hire for the same roles, or are located in the same geography.

This is not a privacy policy. It is a technical architecture enforced at the data layer. Tenant contexts are partitioned at the storage level, each with a dedicated partition key. No query, retrospective, or recalibration event crosses a tenant boundary. The intelligence that one organisation accumulates through its hiring decisions is exclusively that organisation's asset.
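As an illustration of what enforcement at the data layer can look like, the sketch below keys every read and write by a tenant identifier and deliberately offers no operation that spans tenants. It is an in-memory stand-in written under assumptions: `TenantStore` and its methods are hypothetical, and a real deployment would rely on the partitioning features of its storage engine.

```python
from collections import defaultdict


class TenantStore:
    """Outcome store in which the tenant id acts as the partition key."""

    def __init__(self) -> None:
        self._partitions: dict[str, list[dict]] = defaultdict(list)

    def record_outcome(self, tenant_id: str, outcome: dict) -> None:
        # Writes always land in the caller's own partition.
        self._partitions[tenant_id].append(dict(outcome))

    def outcomes_for(self, tenant_id: str) -> list[dict]:
        # Reads are scoped to one partition and return copies, so a caller
        # cannot reach or mutate another tenant's records through this API.
        return [dict(o) for o in self._partitions.get(tenant_id, [])]


store = TenantStore()
store.record_outcome("tenant-a", {"candidate": "c-1", "validated": True})
store.record_outcome("tenant-b", {"candidate": "c-9", "validated": False})
```

A retrospective for `tenant-a` would call `outcomes_for("tenant-a")` and see only that tenant's single record. There is no method that lists or aggregates across partitions.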

The competitive implication of this architecture is significant. Two organisations deploying the same AI hiring platform, hiring for the same role, in the same market, will, after multiple hardening cycles, have meaningfully different systems. Not because the underlying models differ, but because the scoring configurations have been shaped by different outcome histories. The organisation that has completed more hardening cycles has a more precisely calibrated system. That precision is not available to anyone else. It is not a feature of the platform. It is a property of the organisation's own accumulated decision-making, encoded into the system's configuration.

The Digital Twin Interviewer

The long-term consequence of Score Hardening, run consistently over multiple cycles, is something that deserves its own name: the Digital Twin Interviewer.

A Digital Twin, in engineering contexts, is a dynamic computational model that mirrors a physical system in real time, updating as the physical system changes. In the context of hiring intelligence, the concept applies to a different kind of system: the accumulated judgment of an expert interviewer.

An experienced hiring manager who has been conducting interviews in a specific domain for several years has built up a body of pattern recognition that is extremely difficult to articulate explicitly. They know, from the specific way a candidate describes their approach to a problem, whether that candidate is likely to thrive in this team's operating style. They know which technical signals distinguish genuine competency from surface familiarity in this role. They know which behavioural indicators, in this workforce, correlate with three-year retention versus six-month departure. Much of this knowledge is implicit: it has never been written down, cannot be easily transferred, and is lost when the interviewer leaves the organisation.

Score Hardening is the mechanism by which that implicit knowledge is made explicit, structured, and permanent. After multiple hardening cycles, the AI system's scoring configuration encodes the same pattern recognition that the expert interviewer has developed, not because the configuration has been fitted to the interviewer's preferences, but because it has been calibrated against the same outcomes that shaped the interviewer's judgment: real candidates, real decisions, real results.

The system does not behave like the expert interviewer because it mimics them. It behaves like them because it has learned from the same evidence they learned from. The difference is that the system's knowledge is version-controlled, auditable, consistent across every interview session regardless of volume or time of day, and permanent: it does not leave the organisation when an individual does.

What This Means for AI Governance

The Score Hardening and Digital Twin architecture raises governance questions that are worth addressing directly.

The first is the question of transparency. If the scoring configuration changes over time, how does the organisation know what the system is currently doing? The answer is: through the approval workflow and the audit log. Every proposed recalibration is surfaced for human review before it takes effect. Every configuration state is versioned and stored. HR leadership always has access to the current configuration, its history, and the outcome data that informed each recalibration event. The system does not operate as a black box; it operates as a documented, version-controlled configuration that can be inspected at any point.

The second is the question of bias amplification. If the system learns from hiring outcomes, and if historical hiring decisions contained biases, could Score Hardening encode and amplify those biases? This is a legitimate concern, and it is addressed through two mechanisms. First, the scoring framework explicitly excludes demographic attributes (gender, age, caste, religion, disability status) from all scoring dimensions. The system learns from outcome signals that are grounded in performance and retention, not in demographic patterns. Second, the human approval requirement at each hardening cycle creates a review checkpoint where HR leadership can evaluate the proposed recalibration for bias patterns before it takes effect.
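The first mechanism can be expressed as a guard on the scoring configuration itself. The sketch below is hypothetical: the attribute keys mirror the list above, while `validate_dimensions` and `scoring_view` are illustrative names, not platform functions. One function rejects any configuration that tries to score a protected attribute, and the other strips those fields from a candidate record before it reaches scoring.

```python
# Attribute names taken from the text; the exact field keys are assumptions.
PROTECTED_ATTRIBUTES = frozenset(
    {"gender", "age", "caste", "religion", "disability_status"}
)


def validate_dimensions(weights: dict[str, float]) -> None:
    """Raise if a scoring configuration includes a protected attribute."""
    blocked = PROTECTED_ATTRIBUTES & {name.lower() for name in weights}
    if blocked:
        raise ValueError(f"protected attributes cannot be scored: {sorted(blocked)}")


def scoring_view(candidate: dict) -> dict:
    """Return a copy of the record with protected fields removed."""
    return {
        key: value
        for key, value in candidate.items()
        if key.lower() not in PROTECTED_ATTRIBUTES
    }
```

Running `validate_dimensions` on every proposed recalibration, before it is shown to a reviewer, would mean a configuration that weights a protected attribute never reaches the approval step.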

The third is the question of what happens when the system is wrong. Score Hardening is not designed to produce a perfect system; it is designed to produce a continuously improving one. Recalibrations can be reversed. Prior configurations can be restored. The system's recommendations remain advisory at every stage. No hiring decision is automated. The human judgment of the organisation's HR team remains the final authority.

The Compounding Advantage

The strategic argument for Score Hardening is ultimately an argument about compounding. An AI hiring system that does not learn from outcomes is a tool. Its value is fixed at the point of deployment. It does not improve with use.

A system governed by Score Hardening is an asset. Its value compounds with each hiring cycle. The organisation that deploys it and runs it consistently, feeding outcomes back into the configuration, completing hardening cycles, accumulating institutional knowledge, builds a talent intelligence capability that is not available to organisations that have not made the same investment. The gap between the two widens with every cycle.

This is the architectural difference between AI as a productivity tool and AI as a strategic asset. Productivity tools are interchangeable: any organisation can deploy the same tool and receive roughly the same output. Strategic assets are not interchangeable: they are shaped by the specific history, decisions, and outcomes of the organisation that built them.

Score Hardening, bounded context partitioning, and the Digital Twin Interviewer are the mechanisms by which an AI hiring system crosses that line.