The Death of the Interview as We Know It

Unstructured interviews are unreliable and being replaced by AI-driven evaluation

The job interview is one of the oldest rituals in business. It has survived every technological disruption of the last century: the telephone, the fax machine, the internet, the smartphone. It has outlasted every productivity revolution. And for most of that time, it has worked only marginally better than a coin flip.

We are not being provocative. We are being precise.

Decades of organizational psychology research have established that the unstructured job interview (the kind where a hiring manager asks questions they made up that morning, listens for forty-five minutes, and follows their gut) predicts job performance with a validity coefficient of roughly 0.20. For context, a perfect predictor scores 1.0 and a random selector scores 0.0. The average interview scores closer to random than most hiring managers would like to admit.

The question is not whether interviews are broken. The evidence settled that question long ago. The question is what replaces them, and why it has taken this long.

Why the Interview Survived This Long

Broken systems persist when the cost of failure is diffuse and the cost of change is concentrated. In hiring, bad outcomes (a poor performer, a cultural mismatch, a six-month mis-hire) are absorbed across teams, quarters, and budgets. Nobody writes a postmortem on a failed hire the way they write one on a failed product launch. The feedback loop is long and the attribution is murky.

This is why the interview survived. Not because it works. Because the cost of it not working was invisible.

That invisibility is ending. Three forces are making it legible:

Finance is quantifying the cost. McKinsey estimates the average cost of a mis-hire at 30% of annual salary for individual contributors and up to 213% for senior leaders. As companies tighten headcount and demand more from every hire, CFOs are starting to ask the question CHROs have avoided: what is our interview process actually predicting?

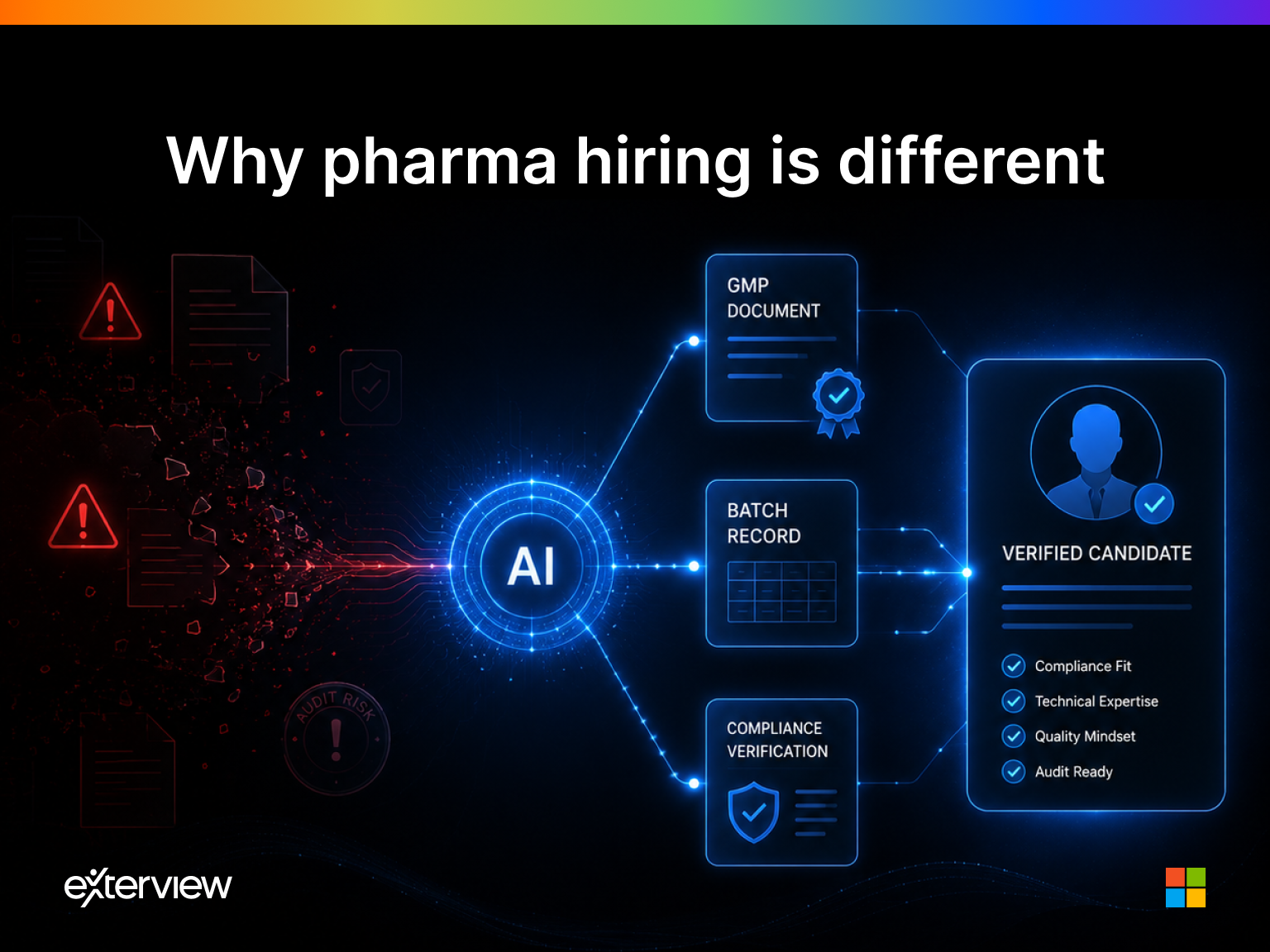

Legal is demanding auditability. The EU AI Act, emerging US state-level hiring regulations, and EEOC enforcement actions are creating compliance pressure around hiring decisions. A process that cannot be explained cannot be defended. Gut-feel interviewing has no audit trail.

AI has made a better system possible. For the first time, the alternative to human intuition is not a rigid rubric or a multiple-choice test. It is a reasoning system: one that can conduct a calibrated conversation, score behavioral signals against validated frameworks, detect inconsistencies, and deliver a recommendation that is both accurate and explainable.

What the Research Actually Says

The validity literature on hiring assessments is extensive and largely ignored by practitioners. Here is what it shows:

Unstructured interviews predict performance with a validity of about 0.20. Structured interviews, in which every candidate is asked the same questions, scored against a consistent rubric, and evaluated on defined competencies, predict at 0.51. Work sample tests reach 0.54. Cognitive ability assessments reach 0.51. Combinations of structured methods approach 0.65.

The gap between what most companies do (unstructured conversation) and what the research recommends (structured, multi-signal evaluation) represents an enormous, largely uncaptured opportunity. Most organizations know this gap exists. Almost none have closed it, because operationalizing structured interviewing at enterprise scale requires a process discipline that human systems cannot reliably maintain.

This is precisely the problem that agentic AI is built to solve.

The Agent Doesn't Have a Bad Day

The most underappreciated advantage of agentic talent intelligence is not accuracy. It is consistency.

Human interviewers are subject to a catalog of well-documented biases: affinity bias, halo effects, contrast effects, confirmation bias, fatigue effects. A candidate interviewed at 9am on a Monday is evaluated differently than an equivalent candidate interviewed at 4pm on a Friday. A candidate who went to the interviewer's alma mater is evaluated differently than one who did not. None of this is intentional. All of it is measurable.

An agent conducts the 10,000th interview with the same rubric, the same follow-up logic, and the same scoring framework as the first. It does not have a bad day. It does not have a preferred candidate type. It does not lean toward a candidate because they remind it of itself at 27.

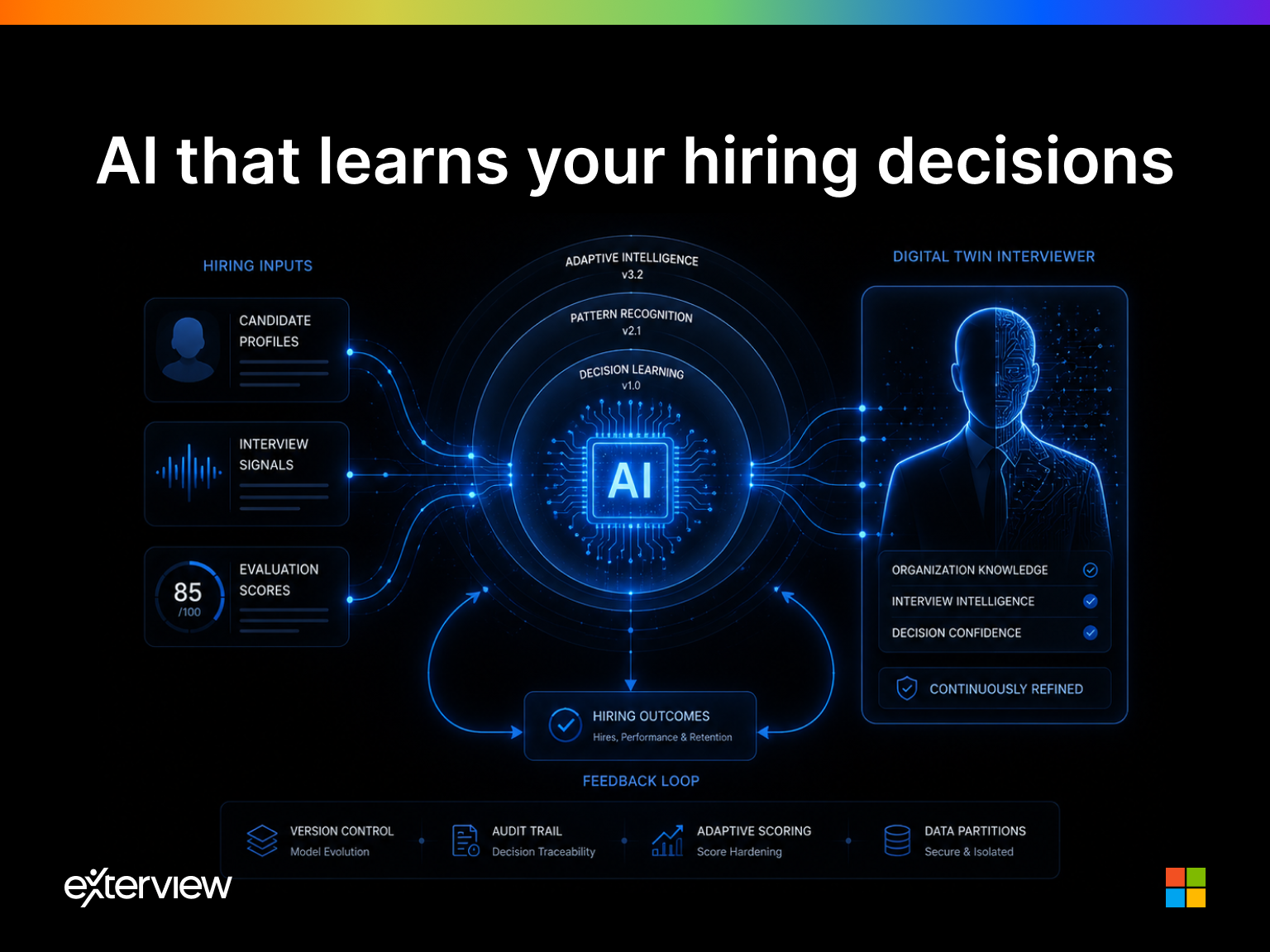

This consistency is not just a fairness argument. It is a performance argument. Consistent evaluation produces comparable signals. Comparable signals produce learnable patterns. Learnable patterns produce better predictions over time, provided interview scores are linked back to how hires actually perform. With that feedback loop in place, the agentic hiring system gets smarter with every interview it conducts. The human interviewer largely does not.

The Replacement Is Already Happening

The transition from human-led to agent-led evaluation is not a future scenario. It is an active market movement. Enterprise talent acquisition teams are already deploying AI for resume screening, asynchronous video assessment, and technical evaluation. The next step, agentic systems that conduct full-cycle structured interviews autonomously, is not a research project. It is a product category in active deployment.

The companies that move first will build two compounding advantages. The first is operational: faster time-to-hire, lower cost-per-hire, higher-quality signal at the top of the funnel. The second is strategic: proprietary interview data, scored and structured at scale, that becomes a training corpus for continuously improving prediction models. This is the data moat that ATS vendors have never been able to build, because they stored data without understanding it.

What Survives

The interview is not dying entirely. The ritual of human conversation will stay: the offer discussion, the values alignment, the relationship-building between a candidate and their future manager. What is dying is the pretense that an unstructured conversation between two strangers reliably predicts whether one of them should hire the other.

The evaluation layer will be agentic. The relationship layer will remain human. And the companies that understand which is which will build hiring systems that are simultaneously more accurate, more defensible, and more humane than anything that exists today.

The interview as we know it is not being replaced. It is being corrected.

Manish is the CEO and Co-founder of Exterview AI, an Agentic Talent Intelligence Platform built for Fortune 500 and Global 2000 enterprises. Exterview's nine-agent architecture conducts structured, auditable hiring evaluations at enterprise scale, replacing the broken unstructured interview with calibrated, explainable signal.