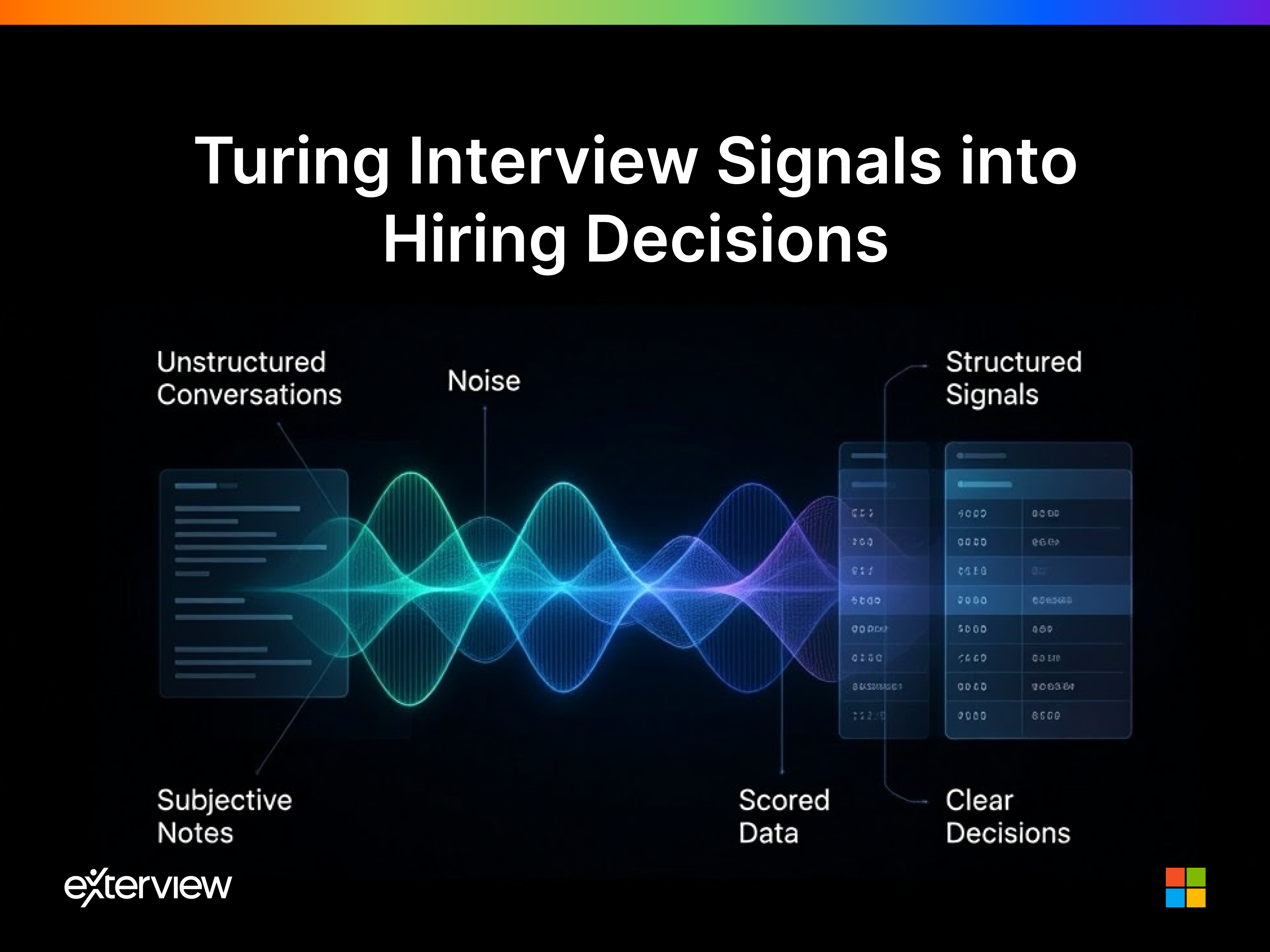

Turning Interview Signals Into Hiring Decisions

How structured validation turns interview signals into better hiring decisions

The interview loop is complete. Five interviewers have met with three finalists. Feedback has been collected. Now comes the moment that determines whether your hiring process is actually a process, or just an expensive ritual that ends with whoever makes the strongest argument in a debrief room.

Phase 2 is where most enterprise hiring falls apart. Not during screening. Not during sourcing. Right here, at the decision point, where organizations have the most information they will ever have about a candidate and the least systematic way to use it.

The Validation Problem

After a multi-round interview process, a typical hiring team has collected:

- Subjective feedback notes from 4 to 6 interviewers

- Pass/fail or 1-5 ratings with no shared scoring definition

- Conflicting opinions from stakeholders with different priorities

- Gut-feel impressions from a candidate's "vibe" in the room

- And occasionally, if the team is unusually disciplined, a structured scorecard or two

None of this is analyzed. None of it is compared systematically. The hiring decision is made in a 45-minute debrief meeting that is dominated by whoever speaks with the most confidence.

The candidate who gets the offer is frequently the one who made the best impression on the most senior person in the room, not the one whose composite capability score most closely matched the role requirements.

This is not decision-making. This is expensive consensus-building.

What Validation Actually Requires

A genuine validation process does four things that most organizations never systematically implement:

1. Cross-Signal Analysis

Every touchpoint in the hiring process, from AI screening to technical assessment to behavioral interview to panel debrief, generates a signal. Validation requires reading these signals together, not in isolation. A candidate who performs brilliantly in a technical screen but struggles with unstructured problem-solving in a panel interview is telling you something specific about their working style. That signal is only visible when you compare across assessments.
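One way to make this concrete is to put every stage's score on a common scale and flag the stages that diverge from a candidate's own average. The sketch below is illustrative only: the stage names, the 0-to-1 scale, and the 0.2 threshold are assumptions chosen for the example, not a prescribed method.

```python
from statistics import mean

# Hypothetical normalized scores (0.0 to 1.0) for one candidate, keyed by stage.
signals = {
    "ai_screening": 0.82,
    "technical_assessment": 0.91,
    "behavioral_interview": 0.78,
    "panel_interview": 0.52,
}

def divergent_signals(scores, threshold=0.2):
    """Return stages whose score differs from the candidate's own mean
    by more than `threshold`, mapped to the signed difference."""
    avg = mean(scores.values())
    return {
        stage: round(score - avg, 2)
        for stage, score in scores.items()
        if abs(score - avg) > threshold
    }

flags = divergent_signals(signals)
print(flags)  # only the panel interview stands out for this candidate
```

A flagged stage is a prompt for a closer look at what that assessment measured, not a verdict on the candidate.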

2. Candidate Comparison Models

Decisions are comparative, not absolute. You are not asking whether Candidate A is good enough for the role in the abstract. You are asking whether Candidate A is a better fit than Candidate B and Candidate C for this specific role at this specific moment in your organization's development.

Comparison requires structured data. Without comparable signals across all finalists, you are comparing intuitions, not candidates.

3. Confidence Scoring

Not every signal should carry equal weight in a hiring decision. A technical assessment result for a senior engineer role should carry more predictive weight than a 30-minute cultural fit conversation. Validation requires an explicit model of signal weight, one that reflects what actually predicts success in the role.
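An explicit weighting model can be as simple as a weighted average. The following is a minimal sketch, assuming every signal has already been normalized to a 0-to-1 scale; the signal names and weights are hypothetical and would need to be calibrated against what actually predicts success in the role.

```python
# Hypothetical weights for a senior engineer role; they must sum to 1.0.
ROLE_WEIGHTS = {
    "technical_assessment": 0.45,
    "behavioral_interview": 0.25,
    "panel_interview": 0.20,
    "culture_conversation": 0.10,
}

def composite_score(scores, weights):
    """Weighted average of normalized (0.0 to 1.0) signal scores."""
    if abs(sum(weights.values()) - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1.0")
    missing = set(weights) - set(scores)
    if missing:
        # Refuse to score a candidate with an incomplete signal set.
        raise ValueError(f"missing signals: {sorted(missing)}")
    return sum(weights[name] * scores[name] for name in weights)

# Hypothetical scores for one finalist.
candidate = {
    "technical_assessment": 0.9,
    "behavioral_interview": 0.7,
    "panel_interview": 0.6,
    "culture_conversation": 0.8,
}

score = composite_score(candidate, ROLE_WEIGHTS)
print(f"composite: {score:.2f}")
```

Raising an error on a missing signal, rather than silently skipping it, keeps scores comparable across finalists.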

4. Decision Audit Trails

Enterprise organizations increasingly require documentation that hiring decisions were made on the basis of role-relevant, non-discriminatory criteria. An audit trail is not bureaucratic overhead. It is the evidence that your hiring process is defensible, equitable, and compliant, at every level from recruiter to CHRO to legal counsel.

How Exterview Powers Phase 2

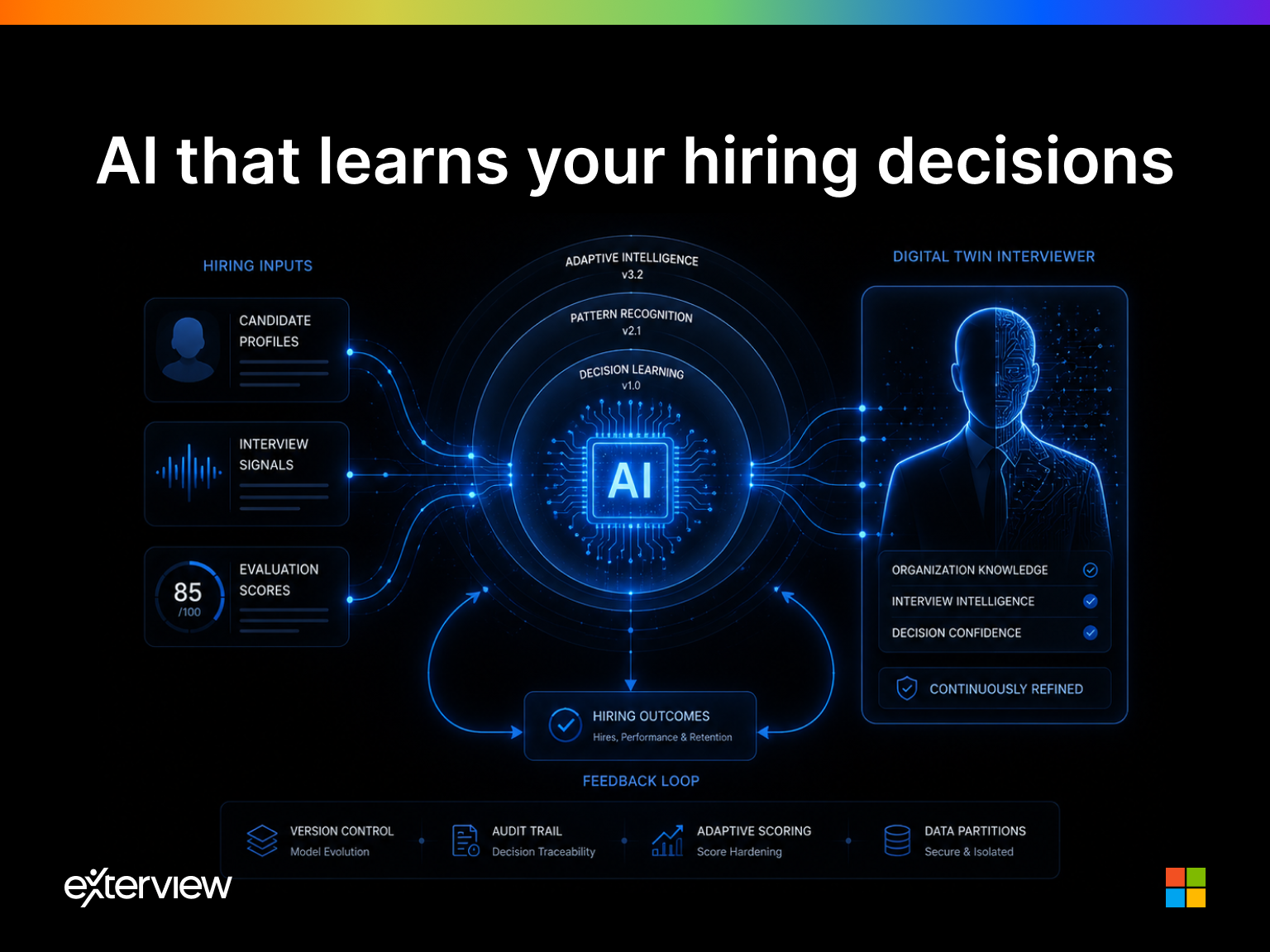

Exterview's Phase 2 architecture is built around a single outcome: transforming interview signals into defensible, high-confidence hiring decisions.

Structured Signal Aggregation

Every evaluation conducted through the Exterview platform, whether AI screening, technical assessment, structured behavioral interview, or panel debrief, generates comparable, structured data. When Phase 2 begins, the Validation layer has access to a complete, standardized evaluation dataset for every finalist candidate.

There are no gaps. No missing scorecards. No "I remember thinking they were strong but I didn't write anything down."

Weighted Scoring Models

Exterview applies role-specific weighting models to each signal type, reflecting the predictive relevance of each assessment to the specific competency requirements of the role. The output is a composite capability score, comparable across all finalists and transparent in its construction.

Comparative Candidate Analysis

The platform generates side-by-side finalist comparisons across every competency dimension that was evaluated. Hiring teams can see, at a glance, where each candidate is strongest, where gaps exist, and how those gaps map to the role's critical requirements.

Debriefs become structured conversations around data, not open debates about impressions.
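To illustrate the idea of a side-by-side view, here is a small sketch that is not a representation of Exterview's implementation. The competency names, the 1-to-5 scale, and the required levels are all hypothetical.

```python
# Hypothetical competency scores on a 1-5 scale for three finalists.
finalists = {
    "Candidate A": {"system_design": 5, "coding": 4, "communication": 3},
    "Candidate B": {"system_design": 3, "coding": 5, "communication": 4},
    "Candidate C": {"system_design": 4, "coding": 4, "communication": 4},
}

# Hypothetical minimum level the role requires for each competency.
role_requirements = {"system_design": 4, "coding": 4, "communication": 3}

def gaps(scores, requirements):
    """Competencies where a candidate is below the required level,
    mapped to the size of the shortfall."""
    return {
        comp: required - scores[comp]
        for comp, required in requirements.items()
        if scores[comp] < required
    }

def comparison_rows(candidates, requirements):
    """One row per competency: required level, then each finalist's score."""
    names = list(candidates)
    header = ["competency", "required", *names]
    rows = [
        [comp, required, *(candidates[name][comp] for name in names)]
        for comp, required in requirements.items()
    ]
    return header, rows

header, rows = comparison_rows(finalists, role_requirements)
print(" | ".join(header))
for row in rows:
    print(" | ".join(str(cell) for cell in row))

for name, scores in finalists.items():
    print(name, "gaps:", gaps(scores, role_requirements))
```

With this sample data, only Candidate B falls short of a required level (system design), which is the kind of specific gap a debrief can then discuss.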

Decision Audit Logging

Every input that contributed to the hiring recommendation is logged, timestamped, and linked to the evaluation that produced it. The audit trail is complete, exportable, and available for compliance review at any time.
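As a rough sketch of what a logged, timestamped, and linked input might look like, consider the record below. The field names and identifiers are assumptions made for illustration and do not reflect Exterview's actual schema or export format.

```python
import json
from dataclasses import dataclass, asdict
from datetime import datetime, timezone

@dataclass(frozen=True)  # frozen: an entry cannot be modified after creation
class AuditEntry:
    candidate_id: str
    evaluation_id: str  # links the input back to the evaluation that produced it
    signal: str
    score: float
    recorded_at: str    # ISO 8601 timestamp in UTC

def record(log, candidate_id, evaluation_id, signal, score):
    """Append a timestamped entry to the log and return it."""
    entry = AuditEntry(
        candidate_id,
        evaluation_id,
        signal,
        score,
        datetime.now(timezone.utc).isoformat(),
    )
    log.append(entry)
    return entry

def export(log):
    """Serialize the full log to JSON for a compliance review."""
    return json.dumps([asdict(entry) for entry in log], indent=2)

audit_log = []
record(audit_log, "cand-001", "eval-tech-17", "technical_assessment", 0.9)
record(audit_log, "cand-001", "eval-panel-04", "panel_interview", 0.6)
print(export(audit_log))
```

Entries are only ever appended, never edited, so the exported log reflects the inputs as they were at decision time.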

The Outcome: Evidence-Based Hiring

Organizations that implement Phase 2 validation correctly experience a measurable shift in hiring quality. It is not immediately visible in offer acceptance rates, but it is consistently visible in 90-day performance outcomes.

When your decisions are built on structured, cross-signal analysis rather than debrief room consensus, you hire the candidates whose capabilities actually match the role, not the candidates whose personalities resonated most strongly with the most opinionated evaluator in the room.

This is the difference between hiring that feels good in the moment and hiring that produces results three months later.

The interview generates the signal. Validation transforms that signal into a decision you can defend, replicate, and learn from. Exterview makes Phase 2 the most rigorous, and most efficient, stage of your entire hiring process.

Your ATS records what decision was made. Exterview records why.